Abstract

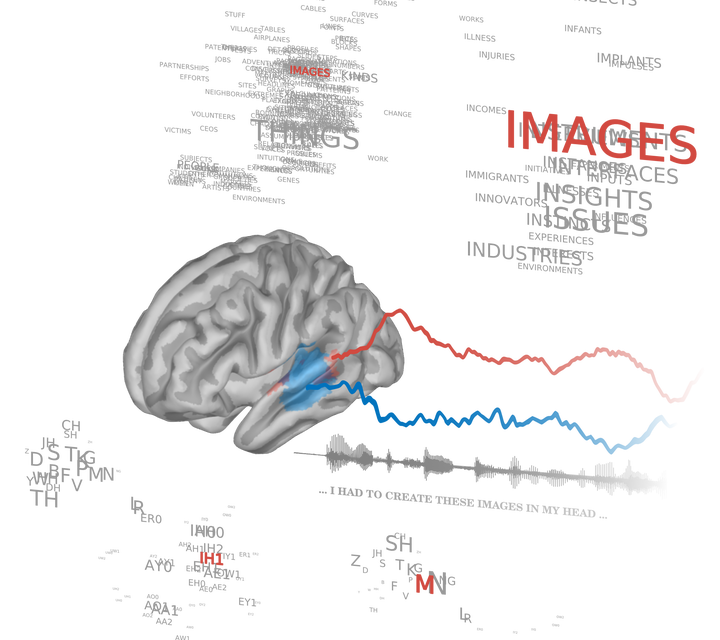

During speech listening, the brain could use contextual predictions to optimize sensory sampling and processing. We asked whether such predictive processing is organized dynamically into separate oscillatory timescales. We trained a neural network that uses context to predict speech at the phoneme level. Using this model, we estimated the contextual uncertainty and surprise of natural speech as factors to explain neurophysiological activity in human listeners. We show, first, that speech-related activity is hierarchically organized into two timescales: fast responses (theta: 4–10 Hz), restricted to early auditory regions, and slow responses (delta: 0.5–4 Hz), dominating in downstream auditory regions. Second, neural activity in these bands is selectively modulated by predictions: the gain of early theta responses varies according to the contextual uncertainty of speech, while later delta responses are selective for surprising speech inputs. We conclude that theta sensory sampling is tuned to maximize expected information gain, while delta encodes only non-redundant information.
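
For orientation, the two predictive quantities can be written in standard information-theoretic form. This is a sketch under assumptions: the abstract does not give formulas, and the symbols $x_t$ (the phoneme at position $t$), $x_{<t}$ (the preceding context), $\mathcal{X}$ (the phoneme inventory), and $P$ (the network's predictive distribution) are introduced here for illustration only. The choice of base-2 logarithms is likewise an assumption.

$$
\begin{aligned}
\text{surprise:}\quad S_t &= -\log_2 P(x_t \mid x_{<t}) \\
\text{uncertainty:}\quad H_t &= -\sum_{x \in \mathcal{X}} P(x \mid x_{<t}) \,\log_2 P(x \mid x_{<t}) = \mathbb{E}_{x \sim P(\cdot \mid x_{<t})}\!\left[-\log_2 P(x \mid x_{<t})\right]
\end{aligned}
$$

Under these definitions, uncertainty is known before the phoneme arrives and equals the expected surprise, which is one way to read "expected information gain"; surprise is known only once the phoneme has been heard and is large exactly when the input was not predictable from context.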